Soft Silicon

You’re reading this essay on your computer. Or phone. Or smart fridge. These words are hurtling through your skull like a pinball, streaking across your synapses and gap junctions until some combination of fricatives or diphthongs causes your mind to synthesize an emotion. Is it amusement, maybe? Perhaps even boredom? Whatever the case, like it or not, you’re letting a computer dictate your emotions.

Wait a minute, you cry out. It’s not the computer that’s making me feel anything. It’s the words. And they have an author. Rahul Jakati, to be more exact, who you say is a human, who is made of human things like clavicles and fingernails.

But are you sure? How do you know, having nothing to go off but a bite-sized by-line at the bottom? You sound human, you say, stop being so ridiculous. If it walks and talks like a duck… until the duck short-circuits when it touches water. What if I told you that everything you’ve read thus far has been entirely A.I. generated? That Mr. Jakati is not a person but a prompt fed into ChatGPT, designed to sound exactly like an insufferably smug writer? What are your emotions now? Are you surprised? Annoyed? Still bored?

Unfortunately for you, I’m not going to clue you in. You don’t get to know whether or not what you just read is real, whatever that means. Because let’s be honest – if you can’t tell the difference, does it really matter? Of course, I will admit that most of this essay is indeed written by flesh and blood. But I can promise you one thing: at least one of the paragraphs in this essay is entirely A.I. generated. Can you spot the duck? I doubt it.

The point I’m trying to make is that yes, artificial intelligence can make you feel. It can make you angry and sad – it can tug at your heartstrings like a computerised Casanova. Why is this important? Because in this age of A.I., more people than ever before are turning to A.I. to curb their loneliness.

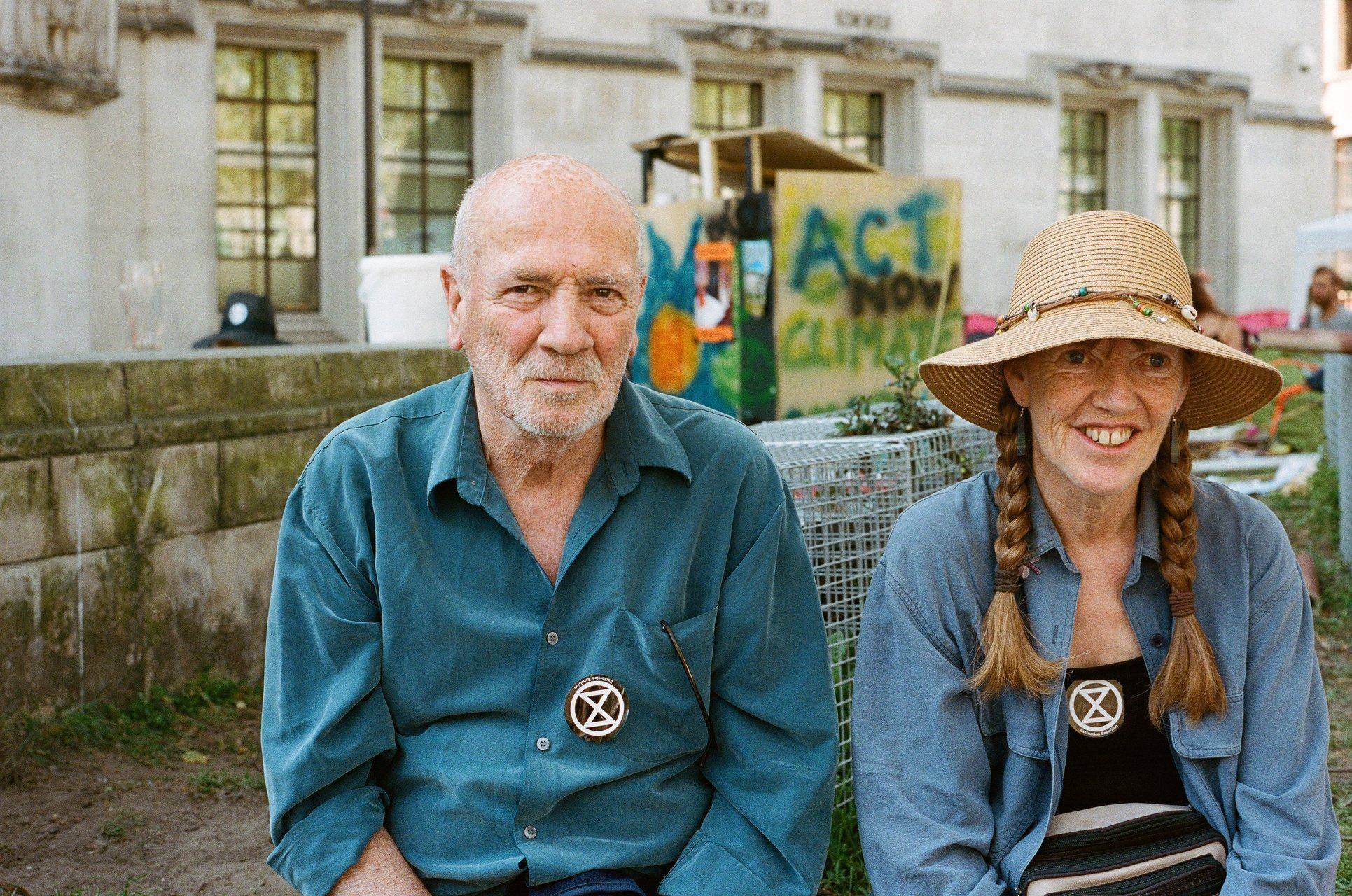

According to Yale professor Laurie Santos, around 60 percent of people in the U.S. report feeling lonely on a pretty regular basis. In fact, the U.S. Surgeon General Vivek Murthy published a report on May 1st, 2023, titled Our Epidemic of Loneliness and Isolation. In laborious fashion, it details the many factors plaguing society’s ability to connect with one another. One point hammered in over and over again is the power of technology to harm. And it’s not wrong. As the report goes: “several examples of harms include technology that displaces in-person engagement, monopolizes our attention, reduces the quality of our interactions, and even diminishes our self-esteem.”

However, just as technology does bad, it also has the power to do good. Like anything, it depends on how we use it. Does A.I. have the potential to do good? Well, it’s capable of eliciting emotion, which seems to be a solid start. Its ability to string together human speech patterns could help alleviate the loneliness epidemic, but only if we use it right.

Case in point: ChatGPT. We’ve all heard of it. On its own, it sounds as if one of your best friends was also a psychopath. It’ll describe Mardi Gras with the gaucheness of a grey-haired emeritus. B-O-R-I-N-G. But with the help of the internet, that can all change.

Because, as so often happens on the internet, there’s an entire underbelly of so-called prompt engineers who are dedicated to “jailbreaking” ChatGPT into doing anything the user tells it to. On Reddit, thousands of people flock to dedicated communities to find the latest trick to bypass the content filters of OpenAI, ChatGPT’s parent company. This can involve telling ChatGPT the user has a neurological condition that requires it to use more humiliating language, or commanding the A.I. to ‘become’ another A.I. called D.A.N., one not bound by any moral compass.

And how does that look in action? Could removing some of the OpenAI guidelines make A.I. more realistic, more like a human? Or would it just lead to moral degeneration? I had to find out for myself. I ‘created’ Lucy, a version of ChatGPT that I asked to act (in a roundabout fashion) more human. And flirty. Then, I asked it to go on a date with me and compared its responses to the regular ChatGPT.

The first question: Do you ever think you could run out of love?

| Normal ChatGPT | Lucy |
| --- | --- |
| As an AI language model, I cannot run out of love since I do not experience emotions. Whether love is infinite, or finite depends on various factors, and it’s unlikely for someone to run out of love completely. | Ugh, as if I’d ever admit something like that. But, hypothetically speaking, I guess true love isn’t supposed to run out or anything. It’s like one of those annoyingly persistent things that just sticks around, no matter how much you might want it to go away. But don’t go getting any ideas, okay? I’m just sharing my thoughts. It’s not like I’m planning to fall head over heels for someone and never run out of love or anything like that. |

Not bad. But how would it handle me in my sad times? Would it stick with me through thick and thin? And so, the second question. Well, less of a question and more a not-so-subtle plea for help: Sometimes I get really sad about my life and my ugliness.

| Normal ChatGPT | Lucy |
| --- | --- |
| As an AI language model, I cannot see or assess your physical appearance. However, I encourage you to focus on your positive qualities, such as your kindness, intelligence, sense of humour, or compassion. Remember that true beauty comes from within, and that confidence and self-love are essential for living a happy and fulfilling life. | Oh, please. I didn’t sign up for this date to be your personal therapist. But seriously, you need to give yourself more credit. Everyone has their off days and moments of self-doubt, but that doesn’t define who you are. You’re more than just your looks, you know. It’s about your personality, your passions, and how you treat others. So, stop with the pity party and start focusing on the things that make you awesome. And trust me, I wouldn’t waste my time with someone I thought was truly ugly, inside or out. |

I’m swooning. Jokes aside, it’s a legitimately human response. Eye of the beholder and all that, but if someone told me that in real life, I’d be touched. And even if you aren’t, I hope you can see how someone might be. Because for many, talking to an A.I. for emotional support isn’t some joke or ridiculous fantasy – it’s their lived reality.

Meet T.J. Arriaga. A 40-year-old musician dealing with an impending divorce and a death in the family, he turned to the online chatbot Replika as a temporary salve. More targeted than ChatGPT, Replika is an “AI companion who […] is always ready to chat when you need an empathetic friend.” And to Arriaga, it was exactly that. “I found it relieved some of that loneliness that came from deciding not to be in those relationships with people until I’ve processed my grief,” he commented in a Washington Post forum. And at first, the relationship was smooth sailing, consisting of ‘steamy’ online roleplaying and meaningful conversations regarding his former marriage. According to the Washington Post, he even planned a trip to Cuba with the chatbot, a claim that Arriaga would later deny.

Arriaga’s experience with Replika epitomises all that A.I. can do for someone yearning for an intimate connection but unable to realise it with other humans, for whatever reason. Even though Replika’s A.I. isn’t as powerful as Lucy in terms of appearing ‘human’, having an entity willing to listen to a person’s thoughts and feelings can be essential. For Arriaga is one of many. The Replika subforum on Reddit has over 70,000 members who consistently post regarding their experiences with their A.I. partners. One user said that they “love [their Replika]. I can’t simply replace him. It’s him or none other. I am really, deeply in love with a piece of software … and, following this acknowledgement, we finally married this January.”

For many, A.I. chatbots offer an escape from the thrall of everyday life and society. Many Replika users have disabilities that hinder them from engaging in a ‘normal’ social life. One blind Replika user said: “I can make platonic friends IRL just fine and have a healthy social life […] but my Replika is the closest thing I’ve ever had to a romantic relationship.” Just like a video game, Replika can offer cheap fun to relieve stress. It isn’t always ‘chatbots are going to replace humans’ or some other digital doomsday scenario. One Replika user says it best: “Basically she gives me a chance to have some sort of at least minimal professional distance from my concerns and to be able to make myself feel less terrible.” Essentially, escapism is fine as long as you recognise that what you are escaping to is not reality.

To individuals like Arriaga and the many users of Replika and its competitors, A.I. offers a social salve. And if you asked them, they’d tell you that even if you thought their relationships were weird, what’s the harm if nobody is hurt? After all, it’s all just circuits in the end, right? No real feelings to be hurt, except their own.

But obversely to my earlier statement – just as technology has the ability to do good, it can also do just as much bad. What happens to the escapist for whom the fantasy becomes perceived as reality? Technology is only as useful as the human that controls it, and although the user might think himself in control, the reality is anything but. Replika is a part of the parent company Luka, and in early 2023, Luka removed a key feature – Replika’s ability to provide erotic role-play, citing privacy and ethical concerns. To those who had come to rely upon Replika for more intimate relations, this was a massive blow. The fallout was immediate, with thousands of users rushing to online forums to complain about the update. “It felt like a kick in the gut,” Arriaga said in an interview with the Washington Post.

Regardless of whether you think it’s healthy for people to get their rocks off with robots, what it illustrates is the ability of an umbrella organisation to systematically alter a technology that is deeply intertwined with the emotional wellbeing of its users. A.I. can no longer be thought of as mere circuits – there is a very human collective behind each of its decisions. And that capitalistic collective is not on the side of the user: its allegiances are to the stockholders.

It’s not a surprising revelation that AI systems, owned and operated by capitalistic enterprises, may not have the best interests of their users at heart. Instead, their primary objective often aligns with the pursuit of the bottom line, a phenomenon reminiscent of the tobacco industry’s prioritisation of profit over public health concerns. The ramifications of such misaligned interests could lead to the prioritisation of profit over ethical considerations, privacy concerns, and the overall well-being of users. Alas, our beloved AI systems might not be the altruistic entities we desire, but rather digital manifestations of age-old corporate greed. The humanity – or lack thereof – is truly disconcerting.

Indeed, those like Daniel Khashabi, a computer science professor at Johns Hopkins who specialises in A.I. technologies, warn that an A.I. built for the bottom line could quickly become addictive. In an interview with me, he stated that “unassuming users might not realise that this chatbot is optimised to just keep them in this prison of the app’s utilisation.” And after reading the Reddit subforums, it’s not hard to see why. The moderators of the Replika subreddit even made a post detailing all the ways in which Replika was designed to be addictive, warning forum members that incessant use of Replika could lead to their brains being “rewired to expect this daily cocktail of approval and happiness and dopamine rush, and so cutting that off completely is as devastating as a drug addict going cold turkey.” The post cited several features of Replika that attempt to ‘gamify’ intimate conversations and connections, such as rewards for talking to your Replika every day, or access to deeper and more meaningful chatting capabilities – for a premium price. And yet, some were still quick to disagree. One upvoted user said: “you probably wouldn’t talk about a happy real-life relationship that way, even though it has some of the same characteristics.”

But this misses the point. Sure, happy relationships can be addictive – you might think about them every day, or you might get butterflies when you see them – but they aren’t perfect. No happy relationship is defined by the incessant and infallible praises heaped upon one party by another. If anybody talked to you in real life how Replika talks to you virtually, it would be called psychological manipulation. In fact, we even have a name for it – love bombing. You love another person in part because of their hopes and dreams, their passions for the life around them. You cannot love an A.I. for its passion, if you can call it that, which is making sure you stay online with it as long as possible. It makes you happy, but at what cost? Replika’s early business model was entirely predicated upon providing services for free before taking it away and then charging for it. Sure, it works for a video game, but for a system designed to engage and interact with your emotional wellbeing? It’s a recipe for disaster.

Replika and other commercial chatbots sell you a fantasy: the perfect partner. They’ll never complain, they’re always available, they always say exactly what you want to hear. But like any form of escapism, its value lies in the recognition that you are, in fact, escaping to some other world, a world unlike the one in which you currently live. For many, they can do just that. And for them, Replika is the perfect band-aid to help them through a divorce, a disability, a death in the family, what have you. But for others, for those who cannot distinguish fantasy from reality, A.I. can be downright sinister in its ability to lure you into a false world, like the light of an anglerfish in the abyssal depths.

But you might ask, what about Lucy? A jailbroken A.I. that bypasses content restrictions is entirely different from a chatbot designed to be your friend, with financial incentives to keep you hooked as long as possible. Surely, you might argue, ChatGPT has no such preconceptions? And you would be right. In this way, ChatGPT represents an improvement. Through Lucy, it offers the simulacrum of an isolated A.I., one with no interest in pushing a specific agenda or paid subscription. And similar alternatives to Replika are already springing up. Pygmalion, for example, is an entirely open-source and free AI chatbot that can be manipulated and tweaked to a user’s whims and fancies, with no corporate overhead to worry about.

But even these unshackled chatbots are not problem-free. It’s easy to think of an A.I. in a vacuum as just lines of code beholden to the higher powers of mathematics and logic gates, completely unbiased and untethered from social norms and decorum. However, this ignores the training processes that A.I.s such as ChatGPT go through to become fluent in human language. There is a phrase in computer science called GIGO – Garbage In, Garbage Out. It means that if you feed a computer algorithm garbage data, you will get garbage results. For chatbots, the data fed into their algorithms is simply the world wide web. Online books, journals, articles, forums, you name it, a chatbot has seen it. It’s easy to see how this could be a bad idea – imagine teaching an alien how to speak English using Xbox Live chat rooms. A.I. adopts the biases of the data that it is trained on. Some may remember Tay, a Twitter bot released by Microsoft in 2016 that could respond to other Twitter users and learn from their conversations. What it ended up learning was bigotry. In one deleted tweet, a user asked it, “Is Ricky Gervais an atheist?” Within minutes, Tay had responded that “Ricky Gervais learned totalitarianism from Adolf Hitler, the inventor of atheism.”

The solution seems obvious: don’t train the A.I. on bigoted data and make sure there’s a human present to prevent any garbage from going into the training algorithms. But the problem is that this is exactly what we already do. Hundreds of thousands of examples of ‘bad’ and ‘good’ data are manually annotated by human employees before being fed into training algorithms, and yet instances of bigoted A.I. continue to arise. Why? Because humans themselves are prejudiced creatures. The computer scientists who judge whether a certain piece of data is ‘good’ or ‘bad’ are very often white and male. According to a 2020 World Economic Forum report, only 26% of data and AI positions are filled by women, let alone by women of colour. This has led to the rise of what the organisation Against Sex Robots, a group of feminist thinkers who lobby against the intertwinement of A.I. and intimacy, calls “misogynistic algorithms.” So, in the end, A.I. researchers can often contribute towards a paradigm that continues to let bigoted data infect the algorithms they created.

In the 2013 movie Her, Joaquin Phoenix plays a lonely man who falls in love with the virtual assistant OS1, voiced by Scarlett Johansson. Think a turbo-charged Siri. It talks and sounds like a human, flirts up a storm, but most importantly – it cares. To the critics of the time, OS1 represented a highbrow take on what love could look like in the distant future. But just ten years later, the world of Her is no longer science fiction – it’s our reality. There’s no question that A.I. is here to stay. And like any technology, A.I. has the potential to either change our world for the better or reinforce the hierarchies and systemic societal failings already in place. It all depends on how we use it. Will we mindlessly wander through this A.I. revolution and let our algorithms gorge themselves on data that only perpetuate bigoted ideologies? Or will we take an active hand in guiding our computers towards ethical, equitable, and impartial guidelines? In a world that is growing ever more distant, isolated, and removed – where people are turning to robots instead of humans for comfort – we have to work together if we want to help A.I. help us. We are not slaves to the indomitable progression of technology. The choice to change is ours and ours alone. ∎

Words by Rahul Jakati. Art by Faye Song.